Modern AI systems are moving beyond single prompts and simple workflows. Today, teams are building tools where multiple AI agents plan, reason, and work together. That’s why agent frameworks have become so important.

When engineers compare AutoGen vs LangChain vs CrewAI, they’re really trying to understand how different AI agent orchestration tools handle real-world complexity. Each framework approaches problem-solving in its own way: some focus on flexible chains, others on structured collaboration or agent-to-agent communication.

This AI agent framework comparison guide explores how these tools fit into modern applications, from automation to decision systems.

By looking at AutoGen’s features, LangChain’s use cases, and CrewAI’s architecture, teams can choose the AI agent framework that best fits their needs in 2026 and build scalable, production-ready systems.

AI agent frameworks are software layers that help engineers build systems where AI models don’t just respond to prompts but actively plan, decide, and take actions.

Instead of a single LLM handling everything, these frameworks allow multiple agents to work together, each with a clear role, such as planning, execution, validation, or coordination.

For engineers, this solves a practical problem. Real-world AI applications often need memory, tools, APIs, and decision logic working together. Writing all of this from scratch quickly becomes messy. AI agent frameworks provide structure for agent communication, task delegation, state management, and error handling. They act as orchestration layers between models, tools, and workflows.

Modern frameworks support both single-agent and multi-agent systems, making them useful for chatbots, data pipelines, autonomous workflows, and internal tools. Some frameworks focus on chaining tasks, while others emphasize agent collaboration and feedback loops.

As AI systems move toward autonomy, engineers are no longer choosing just a model; they’re choosing how intelligence is structured and coordinated. That’s where frameworks like AutoGen, LangChain, and CrewAI come in.

While all three help orchestrate AI-driven workflows, they solve different problems and reflect different design philosophies.

AutoGen focuses on agent-to-agent communication and conversation-driven execution.

LangChain is built around structured chains, tools, and integrations, making it highly flexible.

CrewAI, on the other hand, simplifies multi-agent collaboration by assigning clear roles and goals.

Understanding these differences early can save teams months of rework later.

AutoGen is designed for systems where multiple AI agents talk to each other to solve problems. Instead of a single agent calling tools in a fixed sequence, AutoGen allows agents to collaborate through conversations, much like humans would.

At its core, AutoGen uses conversational agents that exchange messages to plan, execute, and validate tasks. Each agent can represent a role such as planner, coder, reviewer, or executor.

The system progresses through dialogue, not rigid pipelines. This makes AutoGen feel closer to a distributed problem-solving system than a traditional workflow engine.
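The conversation-driven idea is easier to see in code. Below is a minimal, framework-agnostic sketch in plain Python. It is not AutoGen’s API. `StubAgent`, `run_conversation`, the `APPROVED` stop word, and both agents are hypothetical stand-ins, and deterministic functions take the place of LLM calls. The sketch shows the core loop: agents take turns replying to a shared message history until they hit a termination condition or a hard turn limit.

```python
from dataclasses import dataclass
from typing import Callable

Message = tuple[str, str]  # (sender, content)


@dataclass
class StubAgent:
    """Hypothetical agent: a name plus a reply function standing in for an LLM."""
    name: str
    reply: Callable[[list[Message]], str]


def run_conversation(agents: list[StubAgent], task: str, max_turns: int = 6,
                     stop_word: str = "APPROVED") -> list[Message]:
    """Round-robin dialogue that ends on a stop word or a hard turn limit."""
    history: list[Message] = [("user", task)]
    for turn in range(max_turns):
        speaker = agents[turn % len(agents)]
        content = speaker.reply(history)
        history.append((speaker.name, content))
        if stop_word in content:  # termination condition reached
            break
    return history


def planner(history: list[Message]) -> str:
    # Produces a new numbered draft each time it speaks.
    drafts = sum(1 for sender, _ in history if sender == "planner")
    return f"plan v{drafts + 1}"


def reviewer(history: list[Message]) -> str:
    # Approves only the second draft, to show a critique-and-revise cycle.
    last = history[-1][1]
    return "APPROVED" if last == "plan v2" else f"revise: {last} is incomplete"


if __name__ == "__main__":
    log = run_conversation([StubAgent("planner", planner), StubAgent("reviewer", reviewer)],
                           "Design a data pipeline")
    for sender, content in log:
        print(f"{sender}: {content}")
```

Running it prints a planner draft, a reviewer critique, a revised draft, and an approval. The `max_turns` cap matters as much as the stop word. Without it, a reviewer that never approves would keep the dialogue going indefinitely.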

AutoGen also supports human-in-the-loop setups, where a developer or operator can step in during execution. This is useful for debugging complex tasks or handling edge cases.
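A human-in-the-loop checkpoint can be as simple as wrapping an agent so that certain outputs need operator approval before they take effect. The names `with_human_review` and `executor_stub` are hypothetical, and this is not how AutoGen itself wires in human input. The sketch only illustrates the pattern: pause on risky actions and let a person accept or replace them.

```python
from typing import Callable

Reply = Callable[[str], str]


def with_human_review(agent_reply: Reply,
                      ask_human: Callable[[str], str],
                      needs_review: Callable[[str], bool] = lambda _: True) -> Reply:
    """Wrap an agent so a human can accept (empty answer) or replace flagged outputs."""
    def reviewed(prompt: str) -> str:
        proposal = agent_reply(prompt)
        if not needs_review(proposal):
            return proposal  # low-risk output passes straight through
        answer = ask_human(f"Agent proposes {proposal!r}. Enter to accept, or type a replacement: ")
        return answer.strip() or proposal
    return reviewed


def executor_stub(prompt: str) -> str:
    # Hypothetical stand-in for an LLM-backed executor agent.
    return f"DROP TABLE {prompt}" if prompt.startswith("tmp_") else f"SELECT * FROM {prompt}"
```

In an interactive session you could pass `ask_human=input`. In tests, a lambda that returns a fixed answer keeps the behavior deterministic. Gating only risky outputs (here, anything starting with `DROP`) keeps the human from becoming a bottleneck on routine steps.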

AutoGen shines in complex reasoning and exploratory tasks.

It’s well-suited for problems where the path to the solution isn’t fixed in advance. Engineers like it because agents can critique each other, recover from errors, and adapt dynamically.

It’s especially powerful for research tools, autonomous coding assistants, simulations, and systems that require back-and-forth reasoning.

Typical AutoGen use cases include:

- Multi-agent coding and code review systems
- Research assistants that validate findings collaboratively
- Autonomous debugging and problem-solving tools
- Experiment-heavy environments where flexibility matters more than predictability

The tradeoff is control. AutoGen systems can be harder to reason about in production because conversations may evolve unpredictably if not carefully constrained.

LangChain is one of the most widely adopted AI frameworks because it provides structure without over-prescription. It helps engineers connect language models with tools, memory, data sources, and APIs in a clean, modular way.

LangChain is built around the idea of chains: sequences of steps that process inputs and produce outputs.

These chains can include prompts, tool calls, retrieval steps, and custom logic. Over time, LangChain expanded to include agents, memory modules, vector store integrations, and observability tools.

Unlike AutoGen’s conversational style, LangChain emphasizes explicit control. Engineers define how data flows and when tools are invoked.
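A tiny pipeline in plain Python illustrates the explicit-control style. The `Chain` class is hypothetical and written for this article; it is not LangChain’s interface. It mimics a retrieval step, a prompt step, and a model step running in a fixed order. A keyword lookup stands in for a vector store, and a stub function stands in for an LLM.

```python
from dataclasses import dataclass
from typing import Any, Callable

State = dict[str, Any]
Step = Callable[[State], State]


@dataclass
class Chain:
    """Hypothetical minimal chain: runs steps in a fixed, developer-defined order."""
    steps: list[Step]

    def invoke(self, inputs: State) -> State:
        state = dict(inputs)
        for step in self.steps:
            state = {**state, **step(state)}  # each step adds keys to the shared state
        return state


DOCS = {"refunds": "Refunds are issued within 14 days.", "shipping": "Orders ship in 2 days."}


def retrieve(state: State) -> State:
    # Retrieval step: naive keyword lookup instead of a vector store.
    hits = [text for key, text in DOCS.items() if key in state["question"].lower()]
    return {"context": " ".join(hits)}


def build_prompt(state: State) -> State:
    # Prompt step: combine retrieved context with the user question.
    return {"prompt": f"Context: {state['context']}\nQuestion: {state['question']}"}


def fake_llm(state: State) -> State:
    # Model step: stand-in that echoes the context or admits it has none.
    return {"answer": state["context"] or "I don't know."}


rag_chain = Chain([retrieve, build_prompt, fake_llm])
```

The step order is fixed in code, so a failure can be traced to a specific step. The intermediate `context` and `prompt` values can also be inspected. This is the predictability the comparison credits to chain-based designs.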

LangChain’s biggest strength is its ecosystem. It integrates easily with databases, vector stores, cloud services, and external APIs.

This makes it ideal for real-world applications that need reliability, logging, and predictable behavior. It also has a lower learning curve for teams already comfortable with backend systems and APIs.

Typical LangChain use cases include:

- Retrieval-augmented generation (RAG) systems
- Chatbots and internal assistants
- Workflow-driven automation
- Production systems requiring observability and stability

LangChain is often the default choice when teams need to ship fast and maintain control, even if it sacrifices some autonomy compared to agent-first frameworks.

CrewAI is built with one clear goal: make multi-agent systems easier to design and reason about. Instead of free-form conversations or deeply nested chains, CrewAI introduces structure through roles, tasks, and goals.

CrewAI organizes agents like a team. Each agent has a defined role, a responsibility, and a task to complete. A central orchestrator coordinates execution, ensuring agents work toward a shared outcome.

This approach feels intuitive to engineers because it mirrors how human teams operate. You don’t just have agents talking; you have agents working with intent.
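The team metaphor maps naturally onto a few data types. The sketch uses hypothetical `RoleAgent`, `TeamTask`, and `Orchestrator` classes. CrewAI’s actual classes and parameters differ, so check its documentation. The sketch shows the pattern: each agent has a role and a goal, each task is assigned to one agent, and a central orchestrator runs the tasks in sequence, passing each output forward as context. Lambdas stand in for LLM-backed work.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RoleAgent:
    role: str
    goal: str  # kept as metadata; a real system would feed it into the agent's prompt
    work: Callable[[str, str], str]  # (task description, upstream context) -> output


@dataclass
class TeamTask:
    description: str
    agent: RoleAgent


class Orchestrator:
    """Hypothetical central coordinator: runs tasks in order, chaining outputs as context."""

    def __init__(self, tasks: list[TeamTask]):
        self.tasks = tasks

    def run(self) -> list[str]:
        context, outputs = "", []
        for task in self.tasks:
            result = task.agent.work(task.description, context)
            outputs.append(f"[{task.agent.role}] {result}")
            context = result  # the next agent builds on this output
        return outputs


researcher = RoleAgent("researcher", "collect facts", lambda d, c: f"notes on {d}")
writer = RoleAgent("writer", "draft the article", lambda d, c: f"draft based on {c}")
reviewer = RoleAgent("reviewer", "check quality", lambda d, c: f"approved: {c}")

crew = Orchestrator([
    TeamTask("agent frameworks", researcher),
    TeamTask("write summary", writer),
    TeamTask("review summary", reviewer),
])
```

`crew.run()` returns one line per task, ending with `[reviewer] approved: draft based on notes on agent frameworks`.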

CrewAI reduces cognitive overhead. Engineers don’t need to manage complex conversation flows or low-level orchestration. The framework handles coordination while still allowing agents to collaborate.

It strikes a balance between autonomy and control, which makes it appealing for teams experimenting with multi-agent systems for the first time.

Typical CrewAI use cases include:

- Business process automation
- Multi-step content generation
- Research and analysis workflows
- Task-oriented AI teams

CrewAI may not be as flexible as AutoGen or as mature as LangChain, but it offers clarity, something many teams value when scaling beyond prototypes.

Together, these frameworks represent three different answers to the same question: how should intelligent agents work together? The right choice depends less on hype and more on how much control, autonomy, and complexity your system actually needs.

When engineers search for AutoGen vs LangChain vs CrewAI, they usually want a quick, no-nonsense comparison.

This section gives a high-level view of how these frameworks differ in philosophy, focus, and ideal use cases. It’s meant to help readers instantly understand where each tool fits in a multi-agent AI frameworks comparison before diving into technical details.

| Factor | AutoGen | LangChain | CrewAI |
|---|---|---|---|
| Core Philosophy | Conversation-driven agent collaboration | Structured chains and tool-based workflows | Role-based multi-agent teamwork |
| Primary Focus | Agent-to-agent communication and reasoning | Orchestration of LLMs, tools, and data | Simplified multi-agent coordination |
| Best For | Complex, exploratory, autonomous tasks | Production-grade AI applications | Task-oriented multi-agent workflows |

Architecture plays a major role in how AI agents behave in real systems.

This part of the AI agent framework comparison guide breaks down how each framework structures agents, manages orchestration, and balances control versus autonomy.

Understanding these differences is critical when comparing CrewAI and AutoGen from an engineering and scalability perspective.

| Aspect | AutoGen | LangChain | CrewAI |
|---|---|---|---|
| Agent Structure | Conversational agents interacting via messages | Agents embedded within defined chains | Agents with fixed roles and responsibilities |
| Orchestration Style | Dynamic, conversation-based flow | Explicit, developer-defined execution flow | Central orchestrator managing agent tasks |
| Control Level | Low to medium (more autonomy) | High (predictable execution) | Medium (structured autonomy) |
| Human-in-the-Loop Support | Strong support | Optional, implementation-driven | Limited but improving |

Not every team has the same experience level or timeline.

This section compares how easy it is to get started with each framework, how steep the learning curve is, and what kind of engineering effort is required.

It’s especially useful for teams weighing the differences between LangChain and CrewAI or evaluating which option fits their current skill set.

| Aspect | AutoGen | LangChain | CrewAI |
|---|---|---|---|
| Learning Curve | Steep for beginners | Moderate | Beginner-friendly |
| Setup Complexity | Medium to high | Medium | Low |
| Code Predictability | Lower due to agent conversations | High due to explicit chains | Medium |
| Best for New Teams | No | Yes | Yes |

Framework strength isn’t just about core features; it’s also about ecosystem maturity.

This table focuses on integrations, tooling, documentation, and community support, offering a practical AI agent orchestration tools comparison.

It highlights how AutoGen’s features, LangChain’s use cases, and CrewAI’s architecture translate into real-world adoption.

| Aspect | AutoGen | LangChain | CrewAI |
|---|---|---|---|
| Built-in Tooling | Basic | Extensive | Limited but growing |
| Third-Party Integrations | Few | Very strong (databases, APIs, vector stores) | Moderate |
| Community & Adoption | Growing | Large and mature | Early-stage |
| Documentation Quality | Improving | Strong and detailed | Simple and readable |

Performance and scalability decide whether a framework can survive beyond prototypes.

This section compares how each option handles production workloads, observability, and long-term scaling.

For teams aiming to choose the best AI agent framework in 2026, this table provides a realistic view of production readiness and operational trade-offs.

| Aspect | AutoGen | LangChain | CrewAI |
|---|---|---|---|
| Performance Predictability | Variable | High | Medium |
| Scalability | Requires careful design | Proven at scale | Suitable for small–medium systems |
| Observability & Debugging | Challenging | Strong tooling support | Basic |
| Production Readiness | Experimental to semi-stable | Production-ready | Early production use |

When evaluating AutoGen, LangChain, and CrewAI, costs fall into three buckets: initial AI app development, ongoing maintenance, and scaling with usage. While all three frameworks are open source, the real cost comes from engineering time, infrastructure, and model usage.

Initial cost mainly depends on complexity and senior engineering effort.

LangChain: A production-ready system using LangChain typically costs $15,000–$35,000. Its mature ecosystem, built-in integrations, and predictable workflows reduce app development time.

CrewAI: CrewAI projects usually fall in the $20,000–$45,000 range. Role-based agent design speeds up multi-agent builds but still requires custom orchestration logic.

AutoGen: AutoGen-based systems often cost $30,000–$70,000+. Designing agent conversations, guardrails, and recovery logic requires senior engineers and more experimentation.

App maintenance includes bug fixes, prompt tuning, model updates, and monitoring.

LangChain: ~$1,500–$3,500 per month

CrewAI: ~$2,000–$4,500 per month

AutoGen: ~$3,000–$7,000 per month

AutoGen is costlier to maintain because agent behavior can shift as models change, requiring frequent tuning.

Scaling costs are driven by LLM API usage, agent count, and workflow depth.

Small-scale systems (low traffic): $300–$800/month

Mid-scale production systems: $2,000–$6,000/month

Large, multi-agent systems: $10,000+/month

AutoGen systems tend to scale more expensively due to longer agent conversations. LangChain scales most predictably, while CrewAI sits in the middle for task-based workloads.
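The scaling figures above come from simple arithmetic. Monthly model spend is roughly tasks per month × LLM calls per task × tokens per call × price per token. The sketch makes that explicit. Every number in it is an illustrative assumption, not a quote from any provider: the task volume, the call counts, the token counts, and the blended price of $0.002 per 1K tokens. The conversation-style run uses more calls and more tokens per call, because each turn typically resends the growing message history.

```python
def monthly_llm_cost(tasks_per_month: int, calls_per_task: int,
                     tokens_per_call: int, usd_per_1k_tokens: float) -> float:
    """Rough monthly model spend: total tokens x blended price.

    Ignores caching, retries, embeddings, and infrastructure.
    """
    total_tokens = tasks_per_month * calls_per_task * tokens_per_call
    return round(total_tokens / 1000 * usd_per_1k_tokens, 2)


PRICE = 0.002  # hypothetical blended USD per 1K tokens; check your provider's price sheet

# Same workload, different orchestration depth (all figures are illustrative assumptions).
chain_style = monthly_llm_cost(50_000, calls_per_task=3, tokens_per_call=1_500,
                               usd_per_1k_tokens=PRICE)
conversation_style = monthly_llm_cost(50_000, calls_per_task=12, tokens_per_call=2_500,
                                      usd_per_1k_tokens=PRICE)

if __name__ == "__main__":
    print(f"chain-style: ${chain_style:,.2f}/month")
    print(f"conversation-style: ${conversation_style:,.2f}/month")
```

Under these assumptions, the chain-style workload comes to $450 per month and the conversation-style workload to $3,000. That is roughly a 6.7× difference, driven entirely by orchestration depth. The estimate leaves out caching, retries, and infrastructure, so treat it as a rough starting point.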

While AutoGen, LangChain, and CrewAI unlock powerful AI agent capabilities, engineers often face real-world challenges when moving from prototypes to production. Most issues don’t appear in demos; they surface once systems grow in complexity, traffic, and business expectations.

One of the biggest challenges is controlling agent behavior. In AutoGen, agents communicate through conversations, which can lead to unpredictable execution paths if guardrails are weak.

Engineers must carefully design prompts, termination conditions, and fallback logic. Without this, agents may loop, overuse tools, or produce inconsistent outputs.
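Those guardrails can be sketched as a loop that enforces a turn limit, a tool-call budget, and a simple repetition check, and that hands off to a fallback instead of spinning. The function name, the `tool:` prefix convention, and the `ESCALATE_TO_HUMAN` marker are all hypothetical. The agent is any function that maps the transcript so far to its next action.

```python
from typing import Callable


def guarded_loop(next_action: Callable[[list[str]], str], max_turns: int = 8,
                 max_tool_calls: int = 3, done_marker: str = "DONE",
                 fallback: str = "ESCALATE_TO_HUMAN") -> list[str]:
    """Run an agent under a turn limit, a tool-call budget, and a repetition check."""
    transcript: list[str] = []
    tool_calls = 0
    for _ in range(max_turns):
        action = next_action(transcript)
        if action == done_marker:  # normal termination condition
            transcript.append(action)
            return transcript
        if transcript and action == transcript[-1]:  # same output twice in a row: likely a loop
            break
        if action.startswith("tool:"):
            tool_calls += 1
            if tool_calls > max_tool_calls:  # tool overuse
                break
        transcript.append(action)
    transcript.append(fallback)  # stopping without success hands off instead of retrying forever
    return transcript
```

Checking for an identical repeated action is a crude loop detector. Production systems may compare normalized outputs or track repeated tool arguments instead. The principle is the same either way: end on a fallback rather than an unbounded retry.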

LangChain offers better control, but complexity grows as chains become longer and more interconnected. Debugging a failure deep inside a multi-step chain can still be time-consuming.

Debugging AI agent systems is fundamentally harder than debugging traditional code. Outputs can change with model updates, temperature settings, or prompt tweaks.

LangChain provides better observability tools, but AutoGen and CrewAI often require custom logging and tracing. Engineers frequently struggle to identify whether failures come from prompts, tools, APIs, or agent logic.
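Custom tracing doesn’t need to be elaborate to separate prompt, tool, and agent failures. The sketch below is a hypothetical `traced` decorator built on Python’s standard `logging`, `time`, and `functools` modules. For every call, it records the step name, component type, duration, and any exception. All three steps are stubs, and the search tool fails on purpose.

```python
import functools
import logging
import time
from typing import Any, Callable

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("agent-trace")

TRACE: list[dict[str, Any]] = []  # in-memory span store; a real system would export these


def traced(component: str) -> Callable:
    """Record name, component type, duration, and error for every call to the wrapped step."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            record: dict[str, Any] = {"step": fn.__name__, "component": component, "error": None}
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                record["error"] = f"{type(exc).__name__}: {exc}"
                raise  # tracing observes failures; it does not swallow them
            finally:
                record["ms"] = round((time.perf_counter() - start) * 1000, 2)
                TRACE.append(record)
                log.info("%s", record)
        return wrapper
    return decorator


@traced("prompt")
def build_query(question: str) -> str:
    return f"search: {question}"


@traced("tool")
def search_api(query: str) -> list[str]:
    raise TimeoutError("search backend did not respond")  # simulated tool failure


@traced("agent")
def answer(question: str) -> str:
    return ", ".join(search_api(build_query(question)))
```

Calling `answer(...)` raises the `TimeoutError`, but `TRACE` now holds three records in completion order. The first record that carries an error belongs to the `tool` component. That points at the search API rather than the prompt or the agent logic.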

Another common challenge is performance tuning. Multi-agent systems can trigger multiple LLM calls per task, which increases both latency and operating cost.

AutoGen systems are especially prone to this if conversations are not tightly scoped. Engineers must actively optimize token usage, limit agent turns, and cache results to keep systems efficient.
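Two of those optimizations fit in a few lines: caching, and bounding the history sent on each turn. In the sketch, `call_llm` is a hypothetical stand-in that counts its invocations. `functools.lru_cache` serves repeated identical prompts from memory, and `trim_history` keeps the task statement plus only the most recent turns. Caching like this assumes identical prompts can safely return identical answers. That holds best at low temperature and for lookup-style tasks.

```python
import functools

CALLS = {"llm": 0}  # counts real (uncached) model calls


def call_llm(prompt: str) -> str:
    """Hypothetical stand-in for a paid model call."""
    CALLS["llm"] += 1
    return f"summary of <{prompt[:20]}>"


@functools.lru_cache(maxsize=1024)
def cached_llm(prompt: str) -> str:
    # Identical prompts are served from memory instead of triggering a new model call.
    return call_llm(prompt)


def trim_history(messages: list[str], max_turns: int = 4) -> list[str]:
    """Keep the task statement plus only the most recent turns to bound tokens per call."""
    recent = messages[1:][-max_turns:] if max_turns > 0 else []
    return messages[:1] + recent
```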

What works for a small internal tool may not scale well. CrewAI systems can become rigid as workflows grow, requiring refactoring.

LangChain systems scale better, but only if chains are designed with modularity in mind. AutoGen requires the most effort at scale due to its dynamic nature.

Finally, there’s a learning curve. Many teams underestimate the skill level required to build reliable agent systems. Prompt engineering, system design, and AI safety considerations all matter. Without experienced engineers, projects risk becoming unstable or expensive to maintain.

In short, these frameworks are powerful, but success depends on thoughtful design, strong engineering practices, and realistic expectations about complexity and app development cost.

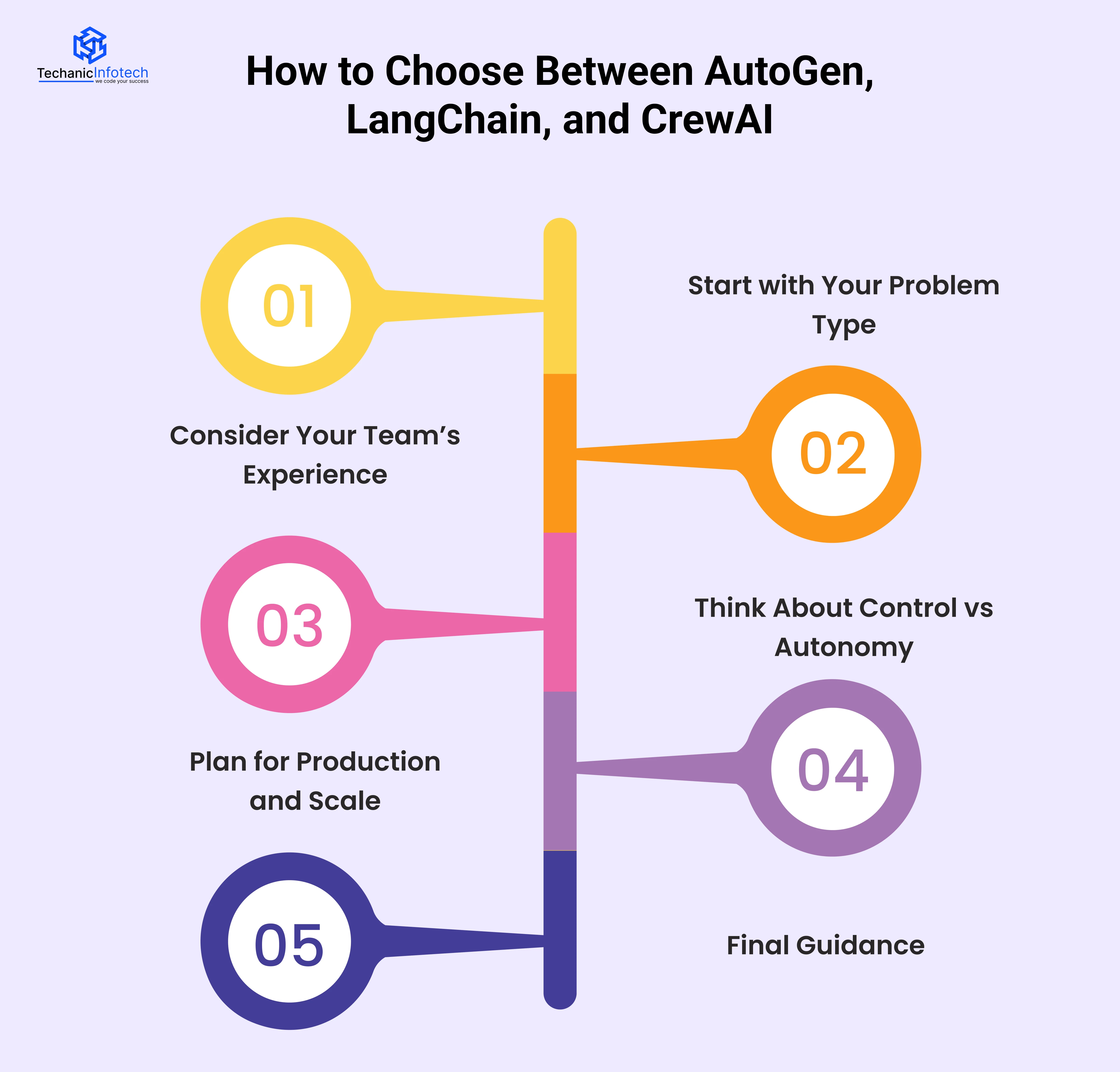

Choosing between AutoGen, LangChain, and CrewAI isn’t about picking the “best” framework; it’s about picking the right one for your use case, team, and business goals.

Each framework shines in different situations, and making the wrong choice early can increase cost and rework later.

If your system requires deep reasoning, exploration, or back-and-forth decision making, AutoGen is often the right fit. It works well when the path to the solution isn’t fixed and agents need to critique, retry, or collaborate dynamically. Research tools, autonomous coding assistants, and complex simulations fall into this category.

If your problem is more workflow-driven, where steps are predictable and tools must be called in a specific order, LangChain is usually the safer option. It’s ideal for chatbots, RAG systems, internal assistants, and production-grade automation.

CrewAI fits best when your use case involves clear roles and tasks. If you can define who does what (researcher, writer, reviewer, or executor), CrewAI keeps things simple without heavy orchestration logic.

Team skill level matters more than many expect.

AutoGen requires strong prompt design, system thinking, and careful guardrails. It’s best suited for teams with senior AI engineers.

LangChain works well for backend and full-stack teams because it feels familiar, structured, modular, and integration-friendly.

Ask how much control you need.

LangChain offers the most predictable execution.

CrewAI balances autonomy with structure.

If you’re building something that must scale, integrate with existing systems, and stay stable over time, LangChain is the most production-ready.

CrewAI works well for small to mid-scale systems.

Choose AutoGen for advanced, autonomous reasoning systems

Choose LangChain for reliable, production-ready AI workflows

Choose CrewAI for simple, role-based multi-agent collaboration

At Techanic Infotech, we help businesses move from AI experiments to production-ready systems. Our team has hands-on experience working with modern agent frameworks like AutoGen, LangChain, and CrewAI, and we don’t push a one-size-fits-all solution.

Our AI app development company focuses on choosing the right architecture based on your use case, team skills, and long-term goals.

From designing multi-agent workflows to optimizing performance, cost, and scalability, we build AI systems that are practical, secure, and ready to scale. Whether you’re comparing frameworks or planning full-scale implementation, we guide you with clarity, not hype.

Choosing between AutoGen, LangChain, and CrewAI comes down to understanding your problem, not following trends.

Each framework has its strengths: AutoGen offers deep agent autonomy, LangChain delivers structure and production reliability, and CrewAI simplifies multi-agent collaboration. The right choice depends on your use case, team experience, budget, and scaling plans.

When evaluated carefully, these frameworks can help you build AI systems that go beyond basic automation and deliver real business value.

A thoughtful framework decision today can save development costs, reduce technical debt, and set a strong foundation for scalable AI systems in the years ahead.

The main difference lies in how they handle AI agents. AutoGen focuses on conversation-driven agent collaboration, LangChain uses structured chains and tools, and CrewAI is built around role-based multi-agent teamwork.

There is no single best option for everyone. AutoGen works best for complex, autonomous reasoning systems, LangChain is ideal for production-ready workflows, and CrewAI suits task-oriented multi-agent setups. The right choice depends on your use case and team experience.

CrewAI is a good choice when you need simple, role-based multi-agent collaboration. It works well for research tasks, content workflows, and business process automation without complex orchestration logic.

AutoGen can scale, but it requires careful design and monitoring. Because agent conversations are dynamic, teams must add guardrails to control cost, performance, and unpredictable behavior in production.

The best AI agent framework in 2026 depends on your goals. LangChain leads for stability and integrations, AutoGen excels in autonomy and reasoning, and CrewAI offers simplicity for multi-agent coordination.

Yes. Some teams combine frameworks, using LangChain for orchestration and data handling while experimenting with AutoGen or CrewAI for specific multi-agent tasks.